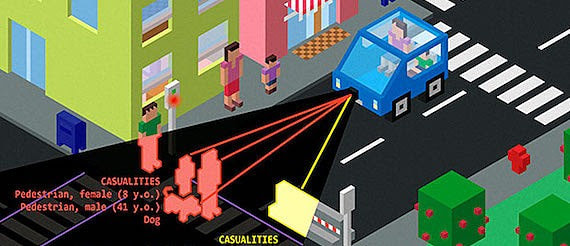

Researchers at the University of Bologna in Italy have carried out some thought-provoking research into the ethics of driverless cars. Their concept is an “ethical knob” that would let owners of self-driving vehicles choose their car’s ethical setting: the car could be set to sacrifice its occupants for the survival of others, or to always sacrifice others in order to protect its occupants.

The dilemma of how self-driving cars should tackle moral decisions is one of the major problems facing manufacturers. When humans drive cars, instinct governs their reaction to danger. When fatal crashes occur, it is usually clear who is responsible. Self-driving cars, of course, must rely on code rather than instinct, meaning that certain ethical considerations will have to be designed into the vehicles’ software in advance.

“With manned cars, in a situation in which the law cannot impose a choice between lives that are of equal importance, such choice rests on the driver, under the protection of the state-of-necessity defence, even in cases in which the driver chooses to save him or herself at the cost of killing many pedestrians,” the authors wrote. “With pre-programmed AVs, such choice is shifted to the programmer, who would not be protected by the state-of-necessity defence whenever the choice would result in killing many agents rather than one.”

People’s attitudes to the issue are (unsurprisingly) complicated. A 2015 study found that most people think a driverless car should be utilitarian, taking actions to minimise overall harm, which might mean sacrificing its own passengers in certain situations. But while people agreed to this in principle, they also said they would never get in a car that was prepared to kill them.

“We wanted to explore what would happen if the control and the responsibility for a car’s actions were given back to the driver,” says Giuseppe Contissa at the University of Bologna, who leads the research team. Their solution is a dial that would switch a car’s setting from “full altruist” to “full egoist”, with the middle setting being impartial. They think their ethical knob would work not only for self-driving cars, but also for other areas of industry that are becoming increasingly autonomous.

“The knob tells an autonomous car the value that the driver gives to his or her life relative to the lives of others,” says Contissa. “The car would use this information to calculate the actions it will execute, taking into account the probability that the passengers or other parties suffer harm as a consequence of the car’s decision.”
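To make the idea concrete, here is a minimal Python sketch of how a knob setting might feed into such a calculation. It is not the researchers’ actual model: the 0-to-1 knob scale, the linear weighting, and the names `Action`, `weighted_harm` and `choose_action` are all assumptions made for illustration, and the sketch ignores how many people are involved on each side.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A hypothetical manoeuvre and its estimated harm probabilities."""
    name: str
    p_harm_passengers: float  # probability the passengers are harmed
    p_harm_others: float      # probability other parties are harmed


def weighted_harm(action: Action, knob: float) -> float:
    """Expected harm weighted by the knob setting (an assumed linear scheme).

    knob = 0.0 is "full altruist", 0.5 is impartial, 1.0 is "full egoist".
    """
    if not 0.0 <= knob <= 1.0:
        raise ValueError("knob must lie between 0.0 and 1.0")
    return knob * action.p_harm_passengers + (1.0 - knob) * action.p_harm_others


def choose_action(actions: list[Action], knob: float) -> Action:
    """Pick the action with the lowest knob-weighted expected harm."""
    return min(actions, key=lambda a: weighted_harm(a, knob))


# Illustrative numbers only: swerving endangers the passengers,
# staying on course endangers other road users.
options = [
    Action("swerve", p_harm_passengers=0.9, p_harm_others=0.1),
    Action("stay", p_harm_passengers=0.1, p_harm_others=0.9),
]

print(choose_action(options, knob=1.0).name)  # full egoist
print(choose_action(options, knob=0.0).name)  # full altruist
```

Under these assumptions a full-egoist setting counts only the risk to passengers and a full-altruist setting counts only the risk to others, so the two extremes pick opposite manoeuvres from the same pair of options.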

But there are issues with the idea. “If people have too much control over the relative risks the car makes, we could have a Tragedy of the Commons type scenario, in which everyone chooses the maximal self-protective mode,” says Edmond Awad of the MIT Media Lab, lead researcher on the Moral Machine project there.

Another concern is that people may be unwilling to take on moral responsibility. If everybody were to choose the impartial option, the ethical knob would not help with the existing dilemma.

“It is too early to decide whether this would be a good solution,” says Awad. But he welcomes a new idea in an otherwise thorny debate.